The idea of homeownership is deeply embedded in the narrative of the modern middle class. Owning a home is almost synonymous with controlling one’s own destiny, securing one’s finances in retirement, and putting down roots in a community.

For more than a century, politicians have encouraged this mentality and pushed policies that steer people toward homeownership over other forms of housing and investment. In America specifically, this culture has led to imprudent investing, regressive wealth distribution, and the perpetuation of inequality. America needs to get over its cultural obsession with homeownership and the accompanying policies that ostensibly promote it.

Homeownership as an investment

In the wonderful story of homeownership, families that plunk down money into home equity over the course of a 30-year mortgage are promised a comfortable nest egg by the time they reach retirement. Encouraging Americans to save more is a worthy goal, but investing in a home often fails to deliver on this storied promise. Putting significant savings into home equity is the equivalent of putting all of one’s eggs in one basket, and it is a basket that cannot change its physical location. Broad stock indices have historically risen over the long term, with bumps along the way, so an investor who can ride out the ups and downs has typically done well over a span like 30 years. Yet unlike an S&P 500 ETF, a house cannot always be held until retirement. Families need to uproot themselves and move for a variety of reasons, and when that happens they may find themselves selling into a down housing market. As we saw in 2008, housing prices are not guaranteed to go up over time.

Policies are regressive and destabilizing

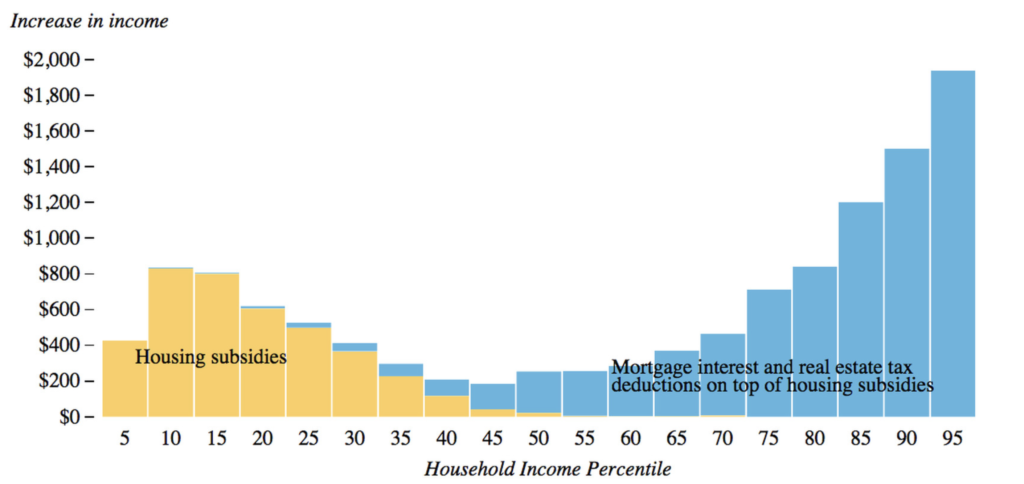

Agencies, legislation, and fiscal policies have been set up in the name of promoting homeownership. Perhaps the most significant is the mortgage interest deduction (MID). By letting families deduct the interest paid on a mortgage from their taxable income, the MID incentivizes them to take out larger, more expensive mortgages than they would without it. Politicians sometimes defend the policy as helping people just on the margin of being able to afford a home: the deduction, the thinking goes, gives them the extra boost that brings them into the exciting club of homeowners. In practice, the deduction benefits only households that itemize, and it is worth the most to those with large mortgages and high marginal tax rates. Regardless of intentions, the result is an American housing policy that favors wealthy homeowners over households on the border between renting and owning.

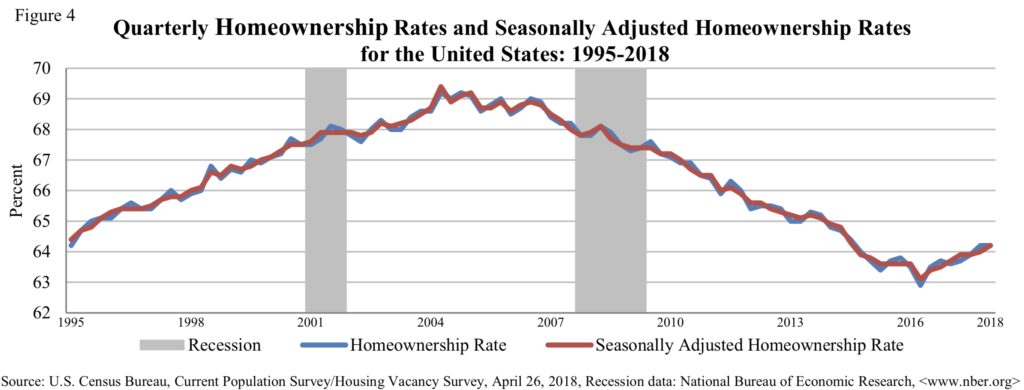

Policies like the MID also destabilize our financial system. As Brink Lindsey and Steven Teles argue in their recent book, *The Captured Economy*, current housing policies encourage an over-reliance on debt that turns the financial system into a massive house of cards. All the while, homeownership rates have barely budged from their 1980 levels.

Compared to a more targeted policy, such as down payment subsidies based on applicants’ income, the MID inflates purchases across the income spectrum, so that even relatively small economic shifts can trigger a wave of foreclosures. Yet the MID is so entrenched in middle-class life that reforming or abolishing it is a political non-starter, even though almost all economists consider it bad policy.

Aggravating NIMBYism

By tying a significant share of families’ savings to immobile homes, homeownership pressures people to move heaven and earth to preserve the value of those homes. This perpetuates NIMBY (Not In My BackYard) policies that often serve a narrow group of residents over the common good.

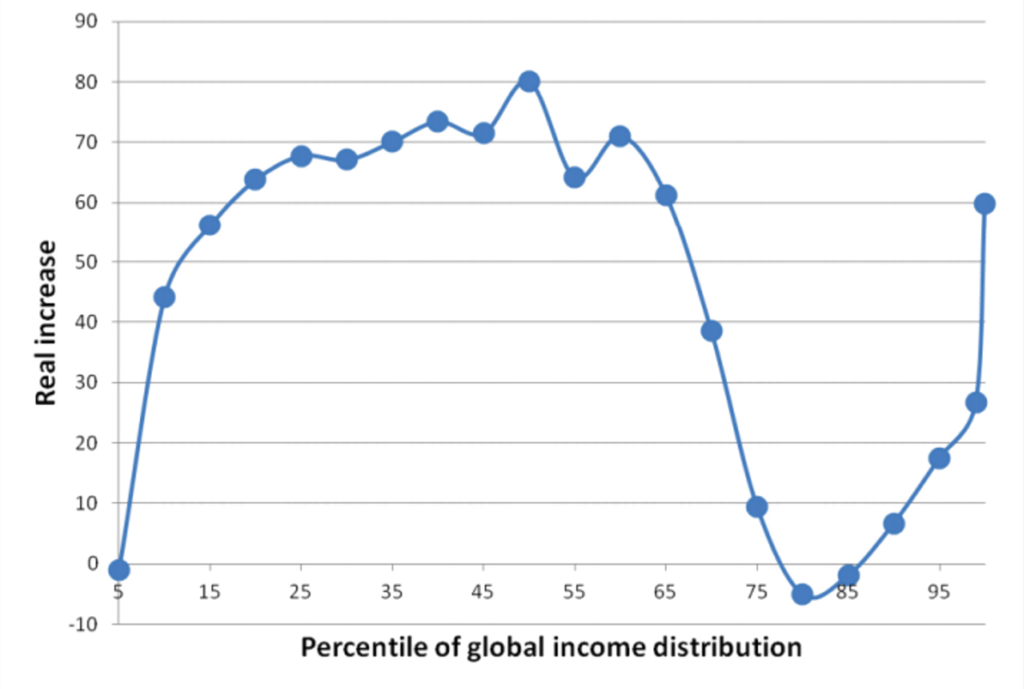

When a city like San Francisco sees a massive increase in demand for housing due to an economic boom, construction of new housing is often choked off, in part because existing residents expect that increased supply will lower their home values. The people who would like to live in San Francisco but cannot afford it have no political clout compared to current residents. Shutting people out of productive hubs like Silicon Valley perpetuates inequality by keeping lower-income people in low-productivity areas while funneling more wealth to owners of capital.

As Mehrsa Baradaran has noted, NIMBYism can drive otherwise progressive people to go to extensive lengths to preserve their home values. Fearing that such policies could lower their home values, existing homeowners often vehemently oppose efforts to integrate neighborhoods and schools. Even when the effects on housing values are uncertain, the risk of changing the character of their neighborhood and schools feels too great when their retirement nest egg is at stake. The existing public education landscape in America, which ties school funding and choice to property taxes and zip codes, is held firmly in place by this desire to preserve property values.

Every area needs to put undesirable infrastructure like garbage dumps, sewage plants, or prisons somewhere. Because people’s finances are so closely tied to the value of their homes, politicians face pressure to place these facilities far from the highest-value homes rather than where they make the most sense for the community. Whether renter or owner, no one wants to live next door to a toxic waste dump. But the motivation to keep it out of your back yard is much stronger for an owner than for a renter, who has far more mobility. Simply put, the risk that any change in the neighborhood poses to property values can be so daunting that the political equilibrium becomes a stagnant status quo.

Racial Wealth Gap

The racial wealth gap in America today is staggering. The median white household has $171k in wealth compared to $17.6k for the median black household. Matt Rognlie, now at Northwestern University, broke down the extensive dataset behind Thomas Piketty’s *Capital in the Twenty-First Century*, which documented rising wealth inequality, into its different kinds of wealth. For America, he found that housing alone could explain essentially the entire divergence in wealth. Because a home is the largest asset for most middle-class families, and black households own homes at much lower rates than white households, housing is central to the racial wealth gap as well. If we are serious about narrowing that gap, we need to rethink American housing policy and recognize that our current policy regime, which claims to encourage homeownership but instead perpetuates NIMBYism and inflates the housing market, is a significant contributing factor.

Alternatives

Without an emphasis on homeownership, would Americans stumble into retirement without a nest egg and live in communities where ever-transient families shy away from putting down roots and investing in local social institutions? Germany and Switzerland have homeownership rates of around 40%, compared with roughly 65% in America. These countries have different policies and cultures that can substitute for the positive effects of homeownership, but they are certainly not community-less dystopias or non-saving wastelands. A gradual transition to less ownership and more renting in America is possible, and it would improve the finances and social cohesion of the country.